ChatGPT Images 2.0's +242 Arena Lead Doesn't Mean You Should Abandon Nano Banana 2 Pro: An Honest Take on What the Leaderboard Actually Measures

OpenAI's ChatGPT Images 2.0 dominates the leaderboard by +242 points, but here's why that number doesn't tell the whole story about which AI image tool you should actually use.


Let me start with something that'll probably get me yelled at in the comments: OpenAI's ChatGPT Images 2.0 topping the Image Arena leaderboard by +242 points over Google's Nano Banana 2 doesn't automatically make it the better tool for your actual work.

Yes, I said it. And before you accuse me of being a Google fanboy or dismiss this as clickbait contrarianism, hear me out. Because what's happening right now in the AI image generation space is a masterclass in how numbers can tell a technically accurate story while missing the entire point.

The Context: OpenAI Just Flexed Hard

In late April 2026, OpenAI released ChatGPT Images 2.0 like a mic drop at a rap battle. The specs are genuinely impressive:

Creator Twitter exploded. Design communities started posting side-by-sides. The "Nano Banana is dead" takes started rolling in faster than AI-generated cat videos.

And look, I get it. A +242 point lead sounds devastating. That's not a close race—that's a blowout.
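To put +242 in concrete terms: assuming the Image Arena score uses the conventional Elo scale (a base-10 logistic curve with a 400-point divisor, the way Arena-style leaderboards usually present their pairwise-preference fits), a rating gap maps directly to an expected share of blind head-to-head votes. The helper below is a hypothetical sketch of that conversion, not the leaderboard's own code.

```python
def expected_win_rate(rating_gap: float, scale: float = 400.0) -> float:
    """Expected share of blind A/B votes won by the higher-rated model.

    Assumes an Elo-style logistic curve (base 10, 400-point scale) and
    ignores ties; the real leaderboard's fitting details may differ.
    """
    return 1.0 / (1.0 + 10 ** (-rating_gap / scale))


for gap in (50, 100, 242):
    print(f"+{gap} points -> {expected_win_rate(gap):.0%} expected win rate")
# +50 points -> 57% expected win rate
# +100 points -> 64% expected win rate
# +242 points -> 80% expected win rate
```

Under that assumption, +242 works out to roughly an 80/20 split: a real blowout, yet still about one blind vote in five expected to go the other way.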

But Here's What the Leaderboard Actually Measures (And What It Doesn't)

The Image Arena leaderboard is basically a popularity contest with a statistical backbone. It measures human preference in blind A/B tests. That's valuable data! But let's talk about what influences those preferences:

1. The "Wow Factor" Beats Practical Utility Every Time

When people are clicking between two images in a blind test, they're not thinking about their actual workflow. They're not considering:

They're just picking the prettier picture. And ChatGPT Images 2.0 is really good at pretty pictures with complex compositions and perfect text rendering. That O-series reasoning? It shows. The images have a compositional sophistication that feels almost unfair.

But here's the thing: most people aren't generating movie posters with Japanese text overlays every day. They're creating product photos, social media content, concept art, and visual brainstorming materials.

2. Speed and Iteration Matter More Than Peak Quality for Most Use Cases

I've been testing both models extensively, running Nano Banana 2 Pro through Soracai's Create page. Here's what I've noticed:

Nano Banana 2 Pro is fast. Like, barely-time-to-refill-your-coffee fast. In standard mode (1 coin), you get solid results in seconds. Even PRO mode (4 coins), with its enhanced detail and color accuracy, doesn't make you wait long.

ChatGPT Images 2.0? That reasoning layer adds processing time. It's not slow by traditional standards, but when you're iterating through concepts—trying different compositions, adjusting elements, exploring variations—those extra seconds per generation add up.

Last week, I was creating thumbnails for a YouTube series. I generated probably 40-50 variations in Nano Banana 2 Pro, tweaking prompts, testing different aspect ratios (the 16:9 YouTube ratio is perfect for this), and using the image-to-image feature with reference photos. The whole process took maybe 30 minutes.

With ChatGPT Images 2.0's pricing and generation time? I would've been more conservative with my iterations. And conservative iteration means fewer creative discoveries.
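The iteration math is easy to sketch. The snippet below assumes the Soracai prices quoted above (1 coin per standard generation, 4 per PRO generation) and that each variation is one billed generation; the 45/5 standard-to-PRO split and the 10-second slowdown are hypothetical inputs for illustration, not measurements of either model.

```python
# Prices quoted in this post for Nano Banana 2 Pro on Soracai
# (assumed to be charged per generation).
STANDARD_COINS = 1
PRO_COINS = 4


def session_coins(standard_runs: int, pro_runs: int) -> int:
    """Total coins for a session that mixes standard and PRO generations."""
    return standard_runs * STANDARD_COINS + pro_runs * PRO_COINS


def added_wait_minutes(runs: int, extra_seconds_per_run: float) -> float:
    """Extra waiting time if every generation is slower by a fixed amount.

    extra_seconds_per_run is a hypothetical input, not a measured latency.
    """
    return runs * extra_seconds_per_run / 60


# Hypothetical session: 45 drafts in standard mode, 5 finals upgraded to PRO.
print(session_coins(45, 5))  # 65
# The same 50 variations, all in PRO mode.
print(session_coins(0, 50))  # 200
# 50 variations, each hypothetically 10 seconds slower.
print(f"{added_wait_minutes(50, 10):.1f} min")  # 8.3 min
```

The point is the multiplication: any per-generation penalty, in coins or in seconds, scales with how many variations you try.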

3. The Best Tool Is the One That Fits Your Actual Workflow

Here's where I'm going to get specific, because vague platitudes about "different tools for different jobs" are useless.

Use ChatGPT Images 2.0 when:

Use Nano Banana 2 Pro (like on Soracai's platform) when:

I've been using Nano Banana 2 Pro in my AI Dance video workflow: generating custom character images, then animating them with Kling 2.6 motion control. Consistency matters more than peak quality here because the video generation step is what people notice.

The Counterargument: "But Shouldn't We Always Use the Best Tool?"

Absolutely! But "best" is contextual, not universal.

The leaderboard measures a specific kind of best-ness: the ability to generate images that humans prefer in isolated comparisons. That's valuable data, but it's not the same as "best for your specific use case and constraints."

It's like saying a Michelin-starred restaurant makes better food than your local taco truck. Technically true by certain metrics, but if you need to feed 20 people for under $100 in 15 minutes, that taco truck is objectively the better choice.

The professional photographers I know don't always shoot in RAW at maximum resolution. Sometimes JPEGs at lower settings are faster to work with and perfectly adequate for the final use. The "technically superior" option isn't always the practically superior option.

What This Means for the AI Image Generation Landscape

Here's my actual hot take: The leaderboard wars are great for pushing technology forward, but terrible for helping normal users make practical decisions.

OpenAI's +242 point lead will push Google to improve Nano Banana. Google's strengths will push OpenAI to optimize speed and cost. This competition benefits everyone.

But if you're abandoning tools that work perfectly well for your needs because a leaderboard number changed, you're optimizing for the wrong metric.

I'm not saying ignore quality differences. I'm saying understand what you're actually measuring and whether it matters for your work. That 99% text rendering accuracy? Genuinely game-changing if you're designing multilingual marketing materials. Completely irrelevant if you're generating background images for video projects.

The Practical Takeaway: Build a Multi-Model Workflow

Here's what I'm actually doing, and what I'd recommend:

For rapid iteration and social content: Nano Banana 2 Pro on Soracai. The speed, cost-effectiveness, and aspect ratio options make it perfect for high-volume creative work. The PRO mode (4 coins) gives you that quality boost when you need it without breaking the bank.

For hero images and complex compositions: ChatGPT Images 2.0. When you need that perfect poster, that flawless text rendering, that compositional sophistication—it's worth the extra time and cost.

For video content: I'm combining Nano Banana 2 Pro for image generation with Soracai's Sora 2 video tools or the AI Dance feature powered by Kling 2.6. The consistency and speed of Nano Banana matter more here because the video generation is the bottleneck anyway.

For trending social content: The Soracai Trends page has effects like the Ghostface filter and action figure creator that are optimized for viral content. These specialized tools often outperform general-purpose models for specific use cases.
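The image side of this workflow boils down to a small routing policy. Here is a hypothetical sketch of it as a lookup table: the Task categories and the pick_model helper are names invented for illustration, not part of any Soracai or OpenAI API, and they only encode the rules of thumb above.

```python
from enum import Enum


class Task(Enum):
    """Hypothetical task categories mirroring the workflow in this section."""
    SOCIAL_ITERATION = "rapid iteration and social content"
    HERO_IMAGE = "hero images and complex compositions"
    VIDEO_SOURCE = "source images for video"


# Which model handles each task type, per the rules of thumb above.
ROUTES = {
    Task.SOCIAL_ITERATION: "Nano Banana 2 Pro",
    Task.HERO_IMAGE: "ChatGPT Images 2.0",
    Task.VIDEO_SOURCE: "Nano Banana 2 Pro",
}


def pick_model(task: Task, needs_polish: bool = False) -> str:
    """Return the model (and Nano Banana mode) to use for a task.

    needs_polish switches Nano Banana 2 Pro from standard mode (1 coin)
    to PRO mode (4 coins); it has no effect on ChatGPT Images 2.0.
    """
    model = ROUTES[task]
    if model == "Nano Banana 2 Pro":
        mode = "PRO mode" if needs_polish else "standard mode"
        return f"{model}, {mode}"
    return model


print(pick_model(Task.SOCIAL_ITERATION))                     # Nano Banana 2 Pro, standard mode
print(pick_model(Task.SOCIAL_ITERATION, needs_polish=True))  # Nano Banana 2 Pro, PRO mode
print(pick_model(Task.HERO_IMAGE))                           # ChatGPT Images 2.0
```

Keeping the policy in one dictionary means that when a leaderboard (or your budget) shifts, changing which model owns a task is a one-line edit.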

Final Thoughts: Stop Chasing Leaderboards, Start Shipping

The AI tools space has a weird obsession with benchmarks and leaderboards. And look, I get why—they provide objective-ish comparisons in a rapidly evolving field.

But the best tool is the one that helps you ship your actual work. Not the one with the highest Arena score. Not the one with the most impressive demo videos. The one that fits your workflow, budget, and creative process.

ChatGPT Images 2.0's +242 point lead is impressive as hell. OpenAI deserves credit for pushing the envelope. But if Nano Banana 2 Pro is generating images that work for your needs in half the time at a fraction of the cost, that leaderboard number is just noise.

Use the right tool for the job. Sometimes that's the leaderboard champion. Sometimes it's the fast, reliable workhorse that doesn't make headlines but gets your work done.

And honestly? Having access to both is probably the actual correct answer.

---

Want to test Nano Banana 2 Pro yourself? Try it free on Soracai's Create page with multiple aspect ratios and image-to-image features. Or explore our AI Dance videos and trending effects to see how different AI models work together for creative projects.

Related Articles

MAI-Image-2-Efficient vs Nano Banana 2 Pro: Microsoft's $5/1M Token Model vs Our 4-Coin System (April 2026 Cost Breakdown)

7 min read

Why Every AI Photographer Will Switch to Nano Banana 2 Pro by 2027: 5 Inevitable Market Shifts Google Just Triggered

9 min read

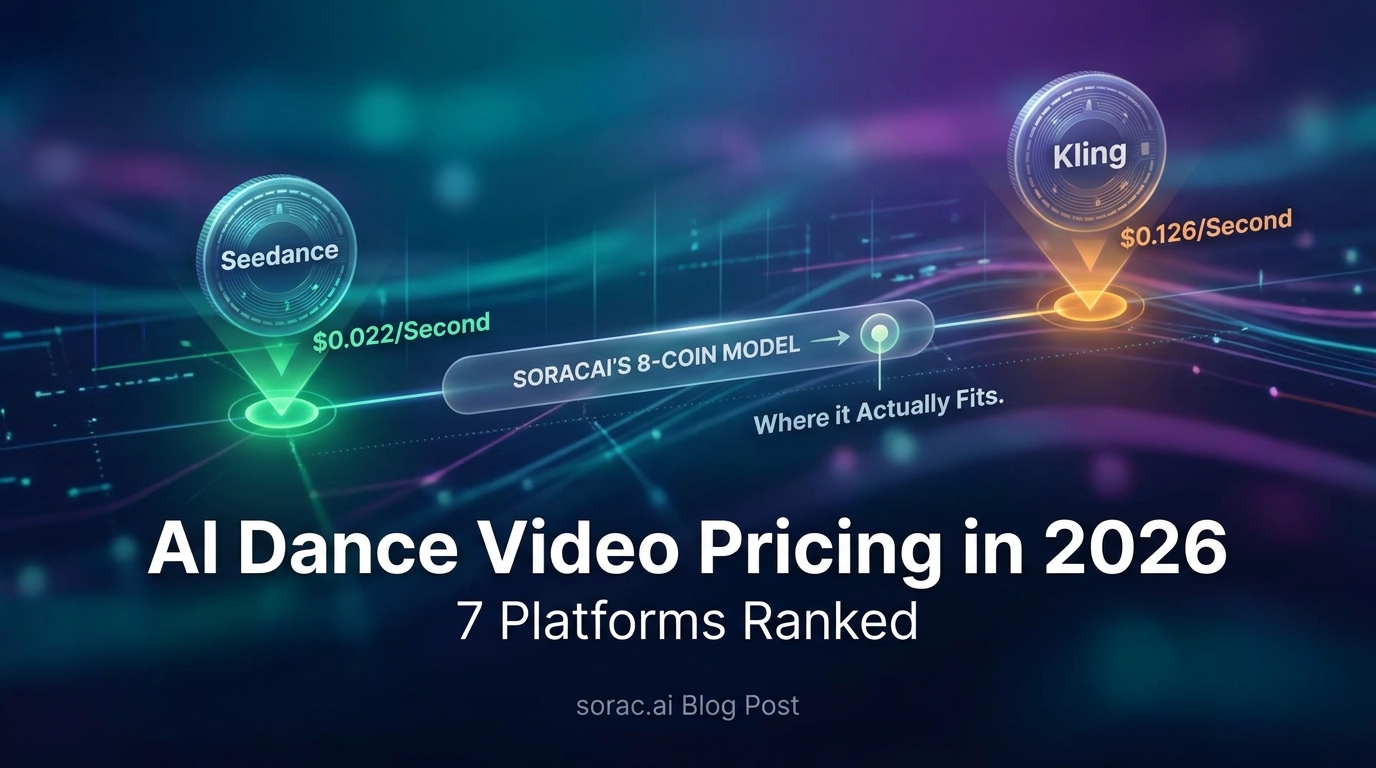

AI Dance Video Pricing in 2026: 7 Platforms Ranked from $0.022/Second (Seedance) to $0.126/Second (Kling) — Where Soracai's 8-Coin Model Actually Fits

7 min read